Open-Source Local AI Models Registry

A verified reference of every open-weight AI model worth running locally, organized by your GPU's VRAM. Every URL points to a live HuggingFace page. Every VRAM placement is validated against actual parameter counts and quantization requirements. Every license restriction is disclosed.

For the narrative walkthrough with hardware recommendations and first-project suggestions, see our Complete Hardware Guide for 2026.

LLMs (Chat / Reasoning)

Tier 1: ~8GB VRAM

| Model | Params | VRAM (Q4) | License | Context | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen3.5-4B | ~5B hybrid | ~5.5GB | Apache 2.0 | 262K (1M YaRN) | | RECOMMENDED. Multimodal (text/image/video). Supersedes Qwen3-4B. Feb 2026. |
| Qwen3-8B | 8.2B dense | ~4.6GB | Apache 2.0 | 32K (131K YaRN) | | Best pure text reasoning at this tier. ~40 tok/s. |
| Nemotron-3-Nano-4B | 3.97B | ~2.5GB | NVIDIA Open | 262K | | Mamba2 hybrid. Edge-optimized (Jetson, mobile). Mar 2026. |
| Phi-4-mini | 3.8B dense | ~2.5GB | MIT | 128K | | Most permissive license. Strong math/logic. Older (Feb 2025). |

Tier 2: ~16GB VRAM

| Model | Params (total/active) | VRAM (Q4) | License | Context | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen3.5-35B-A3B | 35B / 3B active | ~15GB (Q3) | Apache 2.0 | 262K (1M YaRN) | | RECOMMENDED. MoE. Needs Q3/IQ3 to fit 16GB. Multimodal. Mar 2026. |
| Qwen3-30B-A3B | 30.5B / 3.3B active | ~15GB | Apache 2.0 | 32K (131K YaRN) | | MoE. Fits 16GB at Q4 cleanly. Coder variant: Qwen/Qwen3-Coder-30B-A3B-Instruct. |
| Gemma 3 12B | 12B dense | ~7GB | Gemma ToU | 128K | | Multimodal. 140+ languages. Google-backed. Mar 2025. |
| Qwen2.5-VL-7B | 7B dense | ~5GB | Apache 2.0 | 32K | | Strong vision-language. Superseded by Qwen3.5-4B for most tasks. |

Excluded from this tier:

- Mistral Small 4 (119B MoE, 22B active) — needs ~60-70GB at Q4. Does not fit 16GB.

- GLM-4.5-Air (106B MoE, 12B active) — needs ~24GB at INT4. Belongs in Tier 3.

Tier 3: ~24GB VRAM

| Model | Params (total/active) | VRAM (Q4) | License | Context | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen3.5-27B | 27.8B dense | ~17GB | Apache 2.0 | 262K (1M YaRN) | | RECOMMENDED. Multimodal. All params active. MMLU-Pro 86.1. Feb 2026. |
| GLM-4.7-Flash | 31B / 3B active | ~18-20GB | MIT | 200K | | Best coding (SWE-bench 59.2%). MoE. Jan 2026. |
| GLM-4.5-Air | 106B / 12B active | ~24GB (INT4) | MIT | 128K | | Tight fit at INT4 on 24GB. Massive knowledge capacity. |
| Mistral Small 3.2 | 24B dense | ~14-15GB | Apache 2.0 | 128K | | Best tool calling. Vision. Jun 2025. |

Excluded from this tier:

- GLM-4.7 full (358B MoE) — multi-GPU only. Use GLM-4.7-Flash instead.

- Mistral Large 3 (675B MoE, 41B active) — needs ~340GB at Q4. Enterprise only.
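
The exclusions above come down to simple arithmetic: quantized weights take roughly parameter count times bits per weight, divided by eight. The sketch below is a rough estimator, not the method used to validate this registry. The function names, the 4.0 to 4.5 bits-per-weight range for Q4-style quantization, and the 1.5GB allowance for KV cache and runtime buffers are all assumptions for illustration. For MoE models it uses total parameters, since every expert has to be resident even though only a few are active per token.

```python
def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GB (10^9 bytes)."""
    # params (in billions) * bits per weight / 8 bits per byte
    return params_billions * bits_per_weight / 8


def fits(params_billions: float, bits_per_weight: float,
         vram_gb: float, overhead_gb: float = 1.5) -> bool:
    """True if weights plus an assumed fixed overhead fit in vram_gb.

    overhead_gb is a placeholder for KV cache and runtime buffers;
    real overhead grows with context length.
    """
    return weight_gb(params_billions, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    # Qwen3-8B (8.2B dense) at an assumed 4.5 bits per weight
    print(f"Qwen3-8B: {weight_gb(8.2, 4.5):.1f} GB, fits 8GB: {fits(8.2, 4.5, 8)}")
    # Mistral Small 4 (119B total) at the same rate
    print(f"Mistral Small 4: {weight_gb(119, 4.5):.1f} GB, fits 16GB: {fits(119, 4.5, 16)}")
    # Mistral Large 3 (675B total) at a flat 4 bits per weight
    print(f"Mistral Large 3: {weight_gb(675, 4.0):.1f} GB")
```

Treat the result as a lower bound: long contexts, vision encoders, and framework overhead all add to it.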

Quick Reference

| VRAM | Best General Chat | Best for Coding | Best Multimodal |
|---|---|---|---|
| 8GB | Qwen3.5-4B (Q4) | Qwen3-8B (Q4) | Qwen3.5-4B (Q4) |
| 16GB | Qwen3.5-35B-A3B (Q3) | Qwen3-Coder-30B-A3B (Q4) | Qwen3.5-35B-A3B (Q3) |
| 24GB | Qwen3.5-27B (Q4) | GLM-4.7-Flash (Q4) | Qwen3.5-27B (Q4) |
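
If you want the Quick Reference in a script, for example to pick a default model at install time, it maps naturally onto a small lookup. This is a minimal sketch: the `QUICK_REFERENCE` dictionary and the `pick` helper are hypothetical names, and the only data is what the table above lists. It treats each tier as a hard floor, which is stricter than the approximate "~8GB" style labels used in this registry.

```python
from typing import Optional

# Rows copied from the Quick Reference table: tier size in GB -> pick per task.
QUICK_REFERENCE = {
    8: {
        "chat": "Qwen3.5-4B (Q4)",
        "coding": "Qwen3-8B (Q4)",
        "multimodal": "Qwen3.5-4B (Q4)",
    },
    16: {
        "chat": "Qwen3.5-35B-A3B (Q3)",
        "coding": "Qwen3-Coder-30B-A3B (Q4)",
        "multimodal": "Qwen3.5-35B-A3B (Q3)",
    },
    24: {
        "chat": "Qwen3.5-27B (Q4)",
        "coding": "GLM-4.7-Flash (Q4)",
        "multimodal": "Qwen3.5-27B (Q4)",
    },
}


def pick(vram_gb: float, task: str) -> Optional[str]:
    """Return the pick for the largest tier that does not exceed vram_gb.

    Returns None when the GPU is below the smallest tier.
    """
    eligible = [tier for tier in QUICK_REFERENCE if tier <= vram_gb]
    if not eligible:
        return None
    return QUICK_REFERENCE[max(eligible)][task]


if __name__ == "__main__":
    print(pick(12, "coding"))      # a 12GB card falls back to the 8GB tier
    print(pick(24, "multimodal"))
```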

TTS (Text-to-Speech)

Tier 1: CPU / ~8GB VRAM

| Model | Params | VRAM | License | Languages | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Kokoro-82M | 82M | CPU or ~2-3GB | Apache 2.0 | 8 (54 voices) | | 96x real-time. 9.4M+ downloads/month. No voice cloning. |
| Piper TTS | 5-30M/voice | CPU only | MIT | 30+ (100+ voices) | | Runs on Raspberry Pi. Archived Oct 2025; fork: OHF-Voice/piper1-gpl (GPL-3.0). |
| MeloTTS | ~small | CPU | MIT | 6 (multi-accent EN) | | GitHub: myshell-ai/MeloTTS. Language-specific repos on HF. |

Tier 2: ~16GB VRAM

| Model | Params | VRAM | License | Languages | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen3-TTS | 0.6B / 1.7B | ~2GB / ~16GB | Apache 2.0 | 10 | | RECOMMENDED. Voice cloning (3s audio). VoiceDesign variant. 1.67M downloads. Jan 2026. |
| CosyVoice3 0.5B | 0.5B | ~4-8GB | Apache 2.0 | 9 + 18 CN dialects | | Zero-shot voice cloning. 150ms streaming. Successor to CosyVoice2. |
| Chatterbox-Turbo | 350M | ~4.5GB | MIT | EN (Turbo) / 23+ (Multilingual) | | Voice cloning. Emotion control. Multilingual: ResembleAI/chatterbox. |
| Voxtral-4B | 4B | >=16GB | CC BY-NC 4.0 | 9 | | NON-COMMERCIAL. 70ms latency. 20 preset voices. Mar 2026. |
| XTTS-v2 | 467M | <10GB | CPML (NC) | 17 | | NON-COMMERCIAL. Coqui AI defunct (2024). Voice cloning from 6s clip. Unmaintained. |
| Fish Speech 1.5 | ~1B | ~12GB | CC-BY-NC-SA | 13 | | NON-COMMERCIAL. Top TTS Arena ELO (1339). Best quality regardless of license. |

Tier 3: ~24GB VRAM

| Model | Params | VRAM | License | Languages | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Higgs Audio V2 | 6B total | ~24GB | Llama-deriv (<100K users) | 50+ claimed | | Most expressive. Multi-speaker dialogue. Music + speech. |
| Dia2 | 1-2B | ~12-24GB | Apache 2.0 | EN only | | Dialogue/podcast specialist. Multi-speaker. |

TTS License Summary

| Model | Commercial OK? |
|---|---|
| Kokoro-82M | YES (Apache 2.0) |
| Piper TTS | YES (MIT) / Conditional (GPL fork) |
| MeloTTS | YES (MIT) |
| Qwen3-TTS | YES (Apache 2.0) |
| CosyVoice3 | YES (Apache 2.0) |
| Chatterbox | YES (MIT) |
| Voxtral-4B | NO (CC BY-NC 4.0) |
| XTTS-v2 | NO (CPML, company defunct) |
| Fish Speech 1.5 | NO (CC-BY-NC-SA) |
| Higgs Audio V2 | CONDITIONAL (<100K users) |
| Dia2 | YES (Apache 2.0) |
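
For a product build, the summary above works best as a hard filter applied before any quality comparison. The sketch below encodes the table as data and filters it. The `TTS_LICENSES` structure and the `commercially_usable` helper are hypothetical names, and the statuses are copied from the table, not independently checked. Piper is recorded as "yes" for the original MIT release; the GPL-3.0 fork carries its own obligations.

```python
# Status and license per model, copied from the TTS License Summary table.
TTS_LICENSES = {
    "Kokoro-82M": ("yes", "Apache 2.0"),
    "Piper TTS": ("yes", "MIT (GPL-3.0 fork is conditional)"),
    "MeloTTS": ("yes", "MIT"),
    "Qwen3-TTS": ("yes", "Apache 2.0"),
    "CosyVoice3": ("yes", "Apache 2.0"),
    "Chatterbox": ("yes", "MIT"),
    "Voxtral-4B": ("no", "CC BY-NC 4.0"),
    "XTTS-v2": ("no", "CPML, company defunct"),
    "Fish Speech 1.5": ("no", "CC-BY-NC-SA"),
    "Higgs Audio V2": ("conditional", "<100K users"),
    "Dia2": ("yes", "Apache 2.0"),
}


def commercially_usable(include_conditional: bool = False) -> list:
    """List models whose status allows commercial use.

    Conditional licenses are excluded unless include_conditional is True,
    because they need a case-by-case read of the terms.
    """
    allowed = {"yes", "conditional"} if include_conditional else {"yes"}
    return [name for name, (status, _) in TTS_LICENSES.items() if status in allowed]


if __name__ == "__main__":
    print(commercially_usable())
    print(commercially_usable(include_conditional=True))
```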

STT (Speech-to-Text / ASR)

Tier 1: ~8GB VRAM

| Model | Params | VRAM | WER | License | Languages | HuggingFace | Notes |
|---|---|---|---|---|---|---|---|
| IBM Granite 4.0 1B | 2B | ~4GB | 5.52% | Apache 2.0 | 6 (EN,FR,DE,ES,PT,JA) | | #1 Open ASR Leaderboard. Edge-deployable. Keyword biasing. Mar 2026. |
| Cohere Transcribe | 2B | ~4-6GB | 5.42% | Apache 2.0 | 14 | | Conformer-based. 500K hrs training. Mar 2026. |
| Whisper Large V3 Turbo | 809M | ~3-6GB | ~7.75% | MIT | 99 | | Best language breadth. Massive ecosystem. 6.3x faster than full V3. |
| Qwen3-ASR-1.7B | 2B | ~6-8GB | SOTA (many benchmarks) | | 52 (30 + 22 CN dialects) | | Best CJK support. Singing voice transcription. Offline + streaming. Feb 2026. |
| Distil-Whisper Large V3 | 756M | ~5GB | | MIT | EN only | | 6.3x faster than Whisper V3. Within 1% WER. Also: distil-large-v3.5. |

Tier 2: ~16GB VRAM

| Model | Params | VRAM | WER | License | Languages | HuggingFace | Notes |
|---|---|---|---|---|---|---|---|
| Canary-Qwen 2.5B | 2.5B | ~8-10GB | 5.63% | CC-BY-4.0 | EN (reliable) | | SALM arch: can summarize/QA about transcripts. 234K hrs training. |
| Parakeet TDT 1.1B | 1.1B | ~4-7GB | ~8% | CC-BY-4.0 | EN | | Fastest (RTFx >2000). Best for batch/throughput. |
| Granite Speech 3.3 8B | ~9B | ~18GB | 5.85% | Apache 2.0 | 5 + translation | | Best noise robustness. Multilingual ASR + translation. |

Tier 3: ~24GB VRAM

| Model | Params | VRAM | WER | License | Languages | HuggingFace | Notes |
|---|---|---|---|---|---|---|---|
| Whisper Large V3 | 1.55B | ~6-10GB | ~7.4% | Apache 2.0 | 99 | | Ecosystem standard. Widest tooling. Actually fits in mid-range. |

STT Quick Picks

| Need | Best Pick |
|---|---|
| Best accuracy (EN) | IBM Granite 4.0 1B or Cohere Transcribe (~5.5% WER, both Apache 2.0) |
| Most languages (99) | Whisper Large V3 Turbo |
| Best CJK | Qwen3-ASR-1.7B (52 languages) |
| Fastest throughput | Parakeet TDT 1.1B (RTFx >2000) |
| Edge/low-power | IBM Granite 4.0 1B (~4GB, runs on Apple Silicon) |
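
To put the throughput pick in concrete terms: RTFx is the inverse real-time factor, meaning seconds of audio transcribed per second of compute. The short sketch below converts an RTFx figure into wall-clock time. The function name is hypothetical, and published RTFx numbers come from specific benchmark hardware and batch sizes, so treat the result as a best case and not a prediction for your GPU.

```python
def processing_seconds(audio_seconds: float, rtfx: float) -> float:
    """Wall-clock seconds needed to transcribe audio at a given RTFx.

    RTFx = audio duration / processing time, so time = duration / RTFx.
    """
    if rtfx <= 0:
        raise ValueError("rtfx must be positive")
    return audio_seconds / rtfx


if __name__ == "__main__":
    one_hour = 3600.0
    # At RTFx 2000, one hour of audio takes about 1.8 seconds of compute.
    print(f"{processing_seconds(one_hour, 2000):.1f} s")
```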

Vision (VLMs)

Tier 1: ~8GB VRAM

| Model | Params | VRAM | License | Capabilities | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen3-VL-2B | 2B | ~4-5GB | Apache 2.0 | Image, video, OCR (32 langs), GUI agent, 3D grounding | | RECOMMENDED. Most capable at this size. 2.5M+ downloads/month. No 3B version exists. |
| Ministral-3-3B | 3.8B total | ~8GB (FP8) | Apache 2.0 | Image, text, function calling | | Confirmed vision via 0.4B vision encoder. 256K context. No video. |
| SmolVLM2-2.2B | 2.2B | ~5.2GB | Apache 2.0 | Image, video, OCR, document analysis | | Best video at this size. HuggingFace-built. |
| Molmo-7B (Q4) | ~8B | ~5GB (Q4) | Apache 2.0 | Image, pointing/grounding | | Unique pointing architecture. Open weights AND data. |

Tier 2: ~16GB VRAM

| Model | Params | VRAM | License | Capabilities | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen2.5-VL-7B | 7B | ~14-16GB | Apache 2.0 | Image, video (1hr+), OCR (864 score), GUI agent, docs | | Best document understanding (DocVQA 95.7). Also consider: Qwen3-VL-8B. |
| InternVL3-8B | ~7.3B | ~16GB (8GB Q8) | MIT | Image, video, OCR, GUI, 3D, industrial, 100+ langs | | Broadest capability set. Also: InternVL3.5-8B. |
| Pixtral-12B | 12.4B | ~10GB (Q4) | Apache 2.0 | Image (multi), docs, charts, code gen | | Natively multimodal. 128K context. Strong code + vision. |

Tier 3: ~24GB VRAM

| Model | Params | VRAM (Q4) | License | Capabilities | HuggingFace | Notes |
|---|---|---|---|---|---|---|
| Qwen3-VL-32B | 33B | ~18-20GB | Apache 2.0 | Image, video, OCR (32 langs), GUI, 3D, coding | | RECOMMENDED. Most capable open VLM. Needs Q4 to fit 24GB. |
| GLM-4.6V-Flash | 9B | ~7GB (INT4) | MIT | Image, video, OCR, function calling | | First VLM with native vision-driven function calling. Fits 16GB too. |

Does not exist: Qwen3-VL-72B. The Qwen3-VL family jumps from 32B dense to 235B-A22B MoE. The 72B exists only in the older Qwen2.5-VL generation.

Image Generation

Low-End (~8-13GB VRAM)

| Model | Params | VRAM | License | HuggingFace | Notes |
|---|---|---|---|---|---|
| SD 3.5 Medium | 2.5B | ~6-10GB | Community (<$1M free) | | Runs on almost anything. Good typography. Multi-resolution. |
| FLUX.2 klein 4B | 4B | ~13GB | Apache 2.0 | | Sub-second inference. Generation + editing. Best Apache 2.0 option. |
| FLUX.1 schnell | 12B | 8-24GB (8GB Q4) | Apache 2.0 | | Previous gen, still 733K+ downloads/month. 1-4 steps. |

High-End (~24GB VRAM)

| Model | Params | VRAM | License | HuggingFace | Notes |
|---|---|---|---|---|---|
| SD 3.5 Large Turbo | 8.1B | ~11-24GB | Community (<$1M free) | | 4-step fast inference. |
| Qwen-Image | 20B | ~24GB | Apache 2.0 | | Best Apache 2.0 quality. Editing + text rendering (EN/CN). |
| FLUX.2-dev | 32B | ~24GB (Q4) | Non-Commercial | | NON-COMMERCIAL. Best raw quality. Editing + combining. |

Note: There is no "FLUX.2-schnell." FLUX.2 uses: klein (fast), dev, flex, pro. FLUX.1 used: schnell, dev, pro.

All URLs verified against live HuggingFace pages on March 28, 2026. VRAM requirements validated against actual parameter counts, not marketing claims. Models and benchmarks change fast. We will update this registry as new releases change the picture.

Related Posts

How to migrate from Zapier to Make or n8n

I migrated 47 Zaps last year. Took me three weeks, about 40 hours of actual work, and I broke two production workflows along the way. One was a Stripe webhook that silently stopped firing for 36 ho...

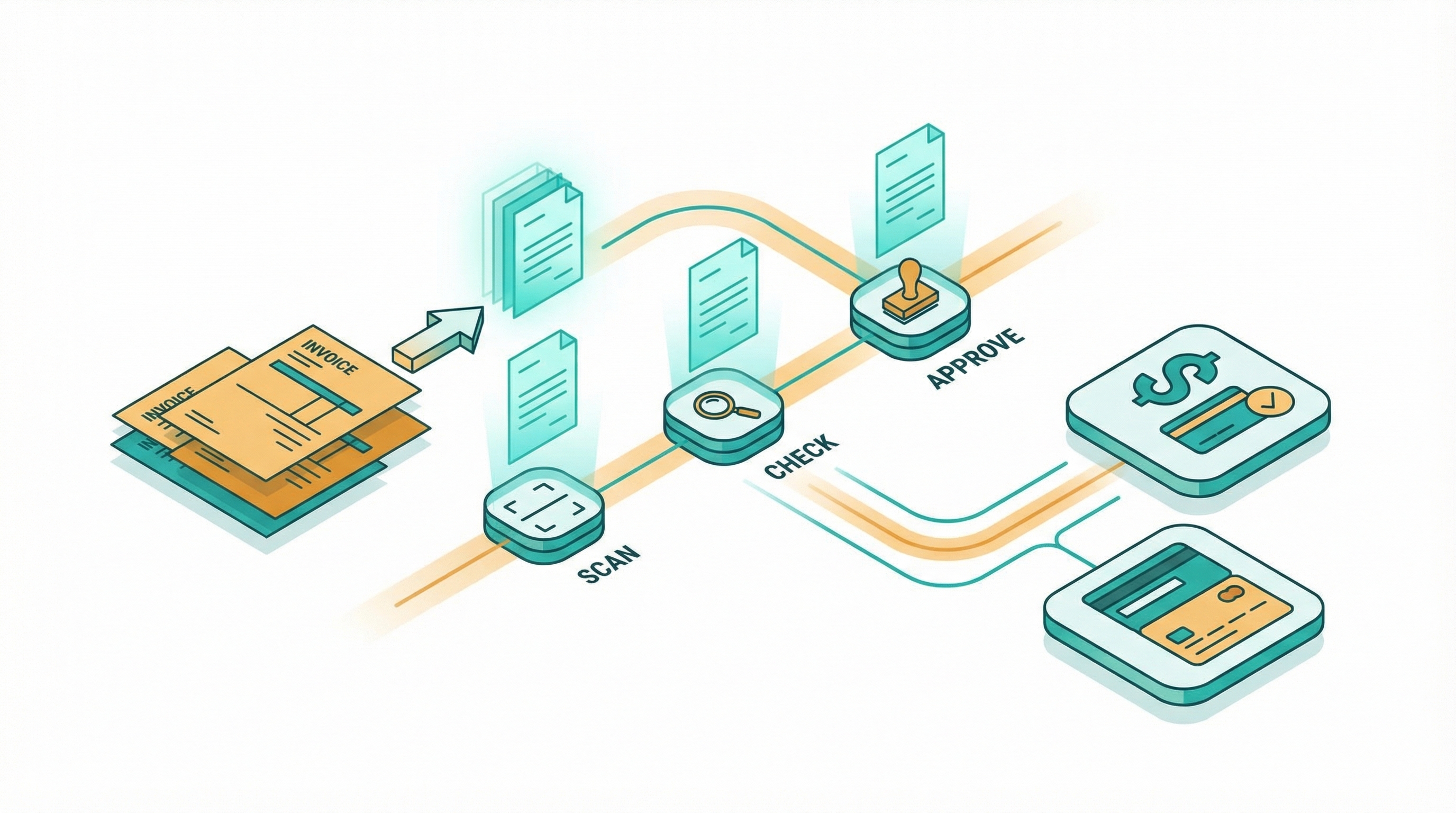

How to automate invoice processing without code

I spent two years manually processing invoices for a consulting business. Every Friday afternoon looked the same: download PDFs from email, retype line items into QuickBooks, cross-check amounts ag...

How to self-host n8n when you are not an engineer

I self-hosted n8n on an $8 VPS last month. The whole thing took about 45 minutes, and I'm not a sysadmin or someone who runs servers for a living. I just got tired of watching my n8n Cloud bill gro...