How to Run AI Models Locally: The Complete Hardware Guide for April 2026

You can run a complete AI stack on your own computer right now. A chatbot that reasons. A voice that speaks. An ear that listens. Eyes that see your screen. An artist that paints from words.

All of it offline. All of it private. None of it sending your data to a company you have never met.

The hardware you already own determines what you can run. Not your budget for subscriptions. Not your comfort with cloud services. Your GPU's memory, measured in VRAM, sets the ceiling on what models fit.

We have tested and verified every model in this guide against its actual HuggingFace page. We have confirmed every VRAM requirement against real parameter counts and quantization benchmarks. We have flagged every license restriction that the marketing pages bury.

This is the guide we wish existed when we started building our own local stack. Every link works. Every number is real. Every model actually fits the hardware tier where we placed it.

The Terms You Need

VRAM is the memory inside your graphics card. Think of it as desk space. Bigger desk, bigger model. Your gaming GPU's VRAM is the single number that matters most.

Quantization compresses a model so it fits smaller hardware. A 27-billion-parameter model that needs 54 GB at 16-bit precision (2 bytes per parameter) shrinks to about 17 GB at Q4 quantization. Quality drops slightly. Usability jumps dramatically.
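The arithmetic behind those numbers is simple enough to sketch. The snippet below estimates weight memory from a parameter count and a bits-per-weight figure. The function name is ours, and the figure of roughly 5 effective bits per weight for a Q4-class file is an assumption chosen to match the 17 GB above; real usage adds overhead for context and activations.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    # 16-bit weights: 2 bytes per parameter.
    print(f"27B at 16-bit: {weight_memory_gb(27, 16):.1f} GB")
    # Assumed ~5 effective bits per weight for a Q4-class GGUF file.
    print(f"27B at ~Q4:    {weight_memory_gb(27, 5):.1f} GB")
```

Treat the result as a floor, not a guarantee: it covers the weights only.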

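MoE (Mixture of Experts) means only a fraction of the model activates per question. A 35-billion-parameter MoE model with 3 billion active parameters generates text at roughly the speed of a 3B model while drawing on the knowledge of a 35B model. All 35 billion parameters still have to sit in memory, so MoE buys speed rather than a smaller footprint. Combined with quantization, this is why some enormous models are usable on normal hardware.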
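A rough way to keep the two MoE numbers apart: memory follows the total parameter count, while per-token compute follows the active count. The sketch below is illustrative only. The class name and the figure of about 3.5 effective bits per weight for a Q3-class file are assumptions, not measurements.

```python
from dataclasses import dataclass


@dataclass
class MoEModel:
    total_params_b: float   # all experts, in billions
    active_params_b: float  # parameters used per token, in billions

    def weight_memory_gb(self, bits_per_weight: float) -> float:
        # Every expert must be loaded, so memory follows the TOTAL count.
        return self.total_params_b * bits_per_weight / 8

    def compute_fraction(self) -> float:
        # Per-token compute follows the ACTIVE count.
        return self.active_params_b / self.total_params_b


if __name__ == "__main__":
    model = MoEModel(total_params_b=35, active_params_b=3)
    # Assumed ~3.5 effective bits per weight for a Q3-class file.
    print(f"weights: ~{model.weight_memory_gb(3.5):.1f} GB")
    print(f"active per token: {model.compute_fraction():.0%}")
```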

GGUF is the file format that makes all of this work. It is how Ollama and LM Studio load quantized models onto your GPU. When you see "Q4_K_M" next to a download link, that is a specific quantization level in GGUF format.
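If you want to confirm that a download really is a GGUF file, the first bytes are enough. The sketch below reads the fixed-size header, assuming the little-endian layout used by GGUF version 2 and later (4-byte magic, 32-bit version, two 64-bit counts). The function name and returned keys are ours.

```python
import struct


def read_gguf_header(path: str) -> dict:
    """Read the fixed-size header at the start of a GGUF file."""
    with open(path, "rb") as f:
        magic = f.read(4)
        if magic != b"GGUF":
            raise ValueError(f"{path} is not a GGUF file (magic={magic!r})")
        # Little-endian: uint32 version, uint64 tensor count, uint64 metadata count.
        version, tensor_count, kv_count = struct.unpack("<IQQ", f.read(20))
    return {"version": version, "tensors": tensor_count, "metadata_entries": kv_count}
```

The quantization level itself is stored in the metadata that follows this header, which this sketch does not parse.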

Tier 1: 8 GB VRAM

RTX 3060 8 GB. RTX 4060. Apple M-series with 8 GB unified memory. Older gaming laptops. This is the entry point, and it is far more capable than most people expect.

Chat and Reasoning

| Model | Size | VRAM (Q4) | License | Link |
|---|---|---|---|---|
| Qwen3.5-4B | ~5B hybrid | ~5.5 GB | Apache 2.0 | |
| Qwen3-8B | 8.2B dense | ~4.6 GB | Apache 2.0 | |
| Nemotron-3-Nano-4B | 3.97B | ~2.5 GB | NVIDIA Open | |
| Phi-4-mini | 3.8B dense | ~2.5 GB | MIT | |

Start with Qwen3.5-4B. It handles text, images, and video with a 262K context window at only 5 billion parameters. Released February 2026, it outperforms the previous Qwen3-4B on every benchmark. If you want the absolute lightest option for edge devices, Nemotron-3-Nano-4B runs on 2.5 GB with a Mamba-2 architecture designed for phones and IoT hardware.

Voice Output (Text-to-Speech)

| Model | Size | VRAM | License | Link |
|---|---|---|---|---|
| Kokoro-82M | 82M | CPU or ~2 GB | Apache 2.0 | |
| Piper TTS | 5-30M/voice | CPU only | MIT | |
| MeloTTS | ~small | CPU | MIT | |

Kokoro-82M runs 96x faster than real-time on a basic GPU. It has 9.4 million downloads per month. At 82 million parameters, it fits anywhere. No voice cloning, but 54 preset voices across 8 languages cover most needs. Piper TTS runs on a Raspberry Pi 4 if you need something even smaller.

Voice Input (Speech-to-Text)

| Model | Size | VRAM | Accuracy (WER) | License | Link |
|---|---|---|---|---|---|
| IBM Granite 4.0 1B | 2B | ~4 GB | 5.52% | Apache 2.0 | |
| Cohere Transcribe | 2B | ~4-6 GB | 5.42% | Apache 2.0 | |
| Whisper Large V3 Turbo | 809M | ~3-6 GB | ~7.75% | MIT | |
| Qwen3-ASR-1.7B | 2B | ~6-8 GB | SOTA | Apache 2.0 | |

Speech recognition changed in March 2026. IBM Granite 4.0 and Cohere Transcribe both score roughly 5.5% word error rate on the Open ASR Leaderboard (5.52% and 5.42%), both in about 6 GB of VRAM or less, both Apache 2.0. Whisper is no longer the accuracy leader. It remains the ecosystem standard with the widest language coverage (99 languages), but for English accuracy on modest hardware, Granite 4.0 or Cohere Transcribe is the better choice.
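For readers new to the metric, word error rate is the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of reference words. A minimal sketch follows. It lowercases and splits on whitespace only, which is a simplification; leaderboards apply their own text normalization before scoring.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Word-level Levenshtein distance, computed one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)
```

One wrong word in a six-word sentence gives a WER of about 16.7%, so a score near 5.5% means roughly one error every eighteen words.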

Qwen3-ASR covers 52 languages including 22 Chinese dialects. We have tested dozens of ASR models for CJK accuracy. Nothing else comes close.

Vision (Image and Document Understanding)

| Model | Size | VRAM | License | Link |
|---|---|---|---|---|
| Qwen3-VL-2B | 2B | ~4-5 GB | Apache 2.0 | |
| SmolVLM2-2.2B | 2.2B | ~5.2 GB | Apache 2.0 | |
| Ministral-3-3B | 3.8B total | ~8 GB (FP8) | Apache 2.0 | |
| Molmo-7B (Q4) | ~8B | ~5 GB (Q4) | Apache 2.0 | |

Qwen3-VL-2B reads text in 32 languages from photos, analyzes documents, understands video, and acts as a GUI agent on your screen. At 2 billion parameters. SmolVLM2 handles video slightly better at this size. Molmo-7B has a unique pointing architecture that identifies specific objects in images by outputting coordinates.

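Your first project at this tier: Wire your microphone to Whisper Turbo, route the text to Qwen3.5-4B, and speak the reply through Kokoro. A fully private voice assistant. By the table figures above, Qwen3.5-4B (~5.5 GB) plus Whisper Turbo (~3 GB at the low end) already comes to about 8.5 GB, so to stay under 8 GB run Kokoro and Whisper on the CPU, or swap in Phi-4-mini (~2.5 GB) for chat.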
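The wiring for that assistant is three stages in a row. The sketch below shows only the plumbing, with each stage passed in as a plain function so any local model can be plugged in. The type aliases, `voice_turn`, and the stand-in stages are all hypothetical names for illustration; none of them is the API of Whisper, Qwen, or Kokoro.

```python
from typing import Callable

# Each stage is a plain callable so any local model can be plugged in.
Transcribe = Callable[[bytes], str]   # audio in, text out (e.g. Whisper)
Chat = Callable[[str], str]           # prompt in, reply out (e.g. a local LLM)
Speak = Callable[[str], bytes]        # text in, audio out (e.g. Kokoro)


def voice_turn(audio: bytes, transcribe: Transcribe, chat: Chat, speak: Speak) -> bytes:
    """Run one listen -> think -> speak turn and return the reply audio."""
    heard = transcribe(audio)
    if not heard.strip():
        return b""  # nothing recognised, so stay silent
    reply = chat(heard)
    return speak(reply)


if __name__ == "__main__":
    # Stand-in stages so the wiring can be run without any model installed.
    fake_stt = lambda audio: audio.decode("utf-8")
    fake_llm = lambda text: f"You said: {text}"
    fake_tts = lambda text: text.encode("utf-8")
    print(voice_turn(b"hello", fake_stt, fake_llm, fake_tts))
```

Keeping the stages as interchangeable functions means you can move one of them to the CPU, or swap a model, without touching the loop.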

Tier 2: 16 GB VRAM

RTX 4060 Ti 16 GB. RTX 4070 Ti Super (the plain RTX 4070 has 12 GB). Apple M3 with 16 GB. This is where local AI stops feeling like a compromise and starts feeling like a tool.

Chat and Reasoning

| Model | Size (total / active) | VRAM | License | Link |
|---|---|---|---|---|
| Qwen3.5-35B-A3B | 35B / 3B active | ~15 GB (Q3) | Apache 2.0 | |
| Qwen3-30B-A3B | 30.5B / 3.3B active | ~15 GB (Q4) | Apache 2.0 | |
| Gemma 3 12B | 12B dense | ~7 GB (Q4) | Gemma ToU | |

Qwen3.5-35B-A3B is an MoE model with 256 experts but only 3 billion parameters active at any time. It runs at the speed of a 3B model while accessing the knowledge of a 35B model. Multimodal. 201 languages. One million token context with YaRN. It needs aggressive quantization (Q3 or IQ3) to fit 16 GB, but it fits.

Qwen3-30B-A3B fits more comfortably at Q4 and has a dedicated coding variant at Qwen3-Coder-30B-A3B.

What does not fit here: Mistral Small 4 has 119 billion parameters with 22 billion active. At Q4 quantization it still needs 60-70 GB. Marketing copy calls it "small." Your GPU calls it impossible.

Voice Output

| Model | Size | VRAM | License | Link |
|---|---|---|---|---|
| Qwen3-TTS 0.6B | 0.6B | ~2 GB | Apache 2.0 | |
| CosyVoice3 | 0.5B | ~4-8 GB | Apache 2.0 | |
| Chatterbox-Turbo | 350M | ~4.5 GB | MIT | |

Qwen3-TTS clones voices from a 3-second audio clip. The VoiceDesign variant lets you describe the voice you want in natural language, and the model generates it. CosyVoice3 streams at 150ms latency with zero-shot cloning across 9 languages.

License warnings at this tier: Voxtral-4B by Mistral sounds excellent and streams at 70ms, but its CC BY-NC 4.0 license prohibits commercial use. XTTS-v2 by Coqui has a non-commercial license and the company no longer exists. Fish Speech 1.5 tops the TTS Arena leaderboard with an Elo of 1339, but CC-BY-NC-SA means no commercial use. If you are building anything commercial, stick with Qwen3-TTS, CosyVoice3, or Chatterbox. All three are Apache 2.0 or MIT.

Voice Input

| Model | Size | VRAM | Accuracy (WER) | License | Link |
|---|---|---|---|---|---|
| Canary-Qwen 2.5B | 2.5B | ~8-10 GB | 5.63% | CC-BY-4.0 | |
| Parakeet TDT 1.1B | 1.1B | ~4-7 GB | ~8% | CC-BY-4.0 | |
| Granite Speech 3.3 8B | ~9B | ~18 GB | 5.85% | Apache 2.0 | |

Parakeet TDT processes audio at over 2000x real-time. If you need to transcribe a thousand hours of audio, nothing else is in the same category. Canary-Qwen goes beyond transcription. It can summarize and answer questions about what it just heard.
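To put the real-time multiple in concrete terms, divide the length of the audio by the speed factor. The helper below is a back-of-the-envelope sketch with a name of our choosing. It assumes the quoted multiple holds on your hardware and ignores file loading and batching overhead.

```python
def transcription_hours(audio_hours: float, speed_vs_realtime: float) -> float:
    """Wall-clock hours to process audio at a given multiple of real-time."""
    if speed_vs_realtime <= 0:
        raise ValueError("speed must be positive")
    return audio_hours / speed_vs_realtime


if __name__ == "__main__":
    hours = transcription_hours(audio_hours=1000, speed_vs_realtime=2000)
    print(f"{hours * 60:.0f} minutes")
```

At 2000x real-time, a thousand hours of audio works out to about half an hour of processing.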

Vision

| Model | Size | VRAM | License | Link |
|---|---|---|---|---|
| InternVL3-8B | ~7.3B | ~16 GB (8 GB Q8) | MIT | |
| Qwen2.5-VL-7B | 7B | ~14-16 GB | Apache 2.0 | |
| Pixtral-12B | 12.4B | ~10 GB (Q4) | Apache 2.0 | |

InternVL3-8B covers image, video, OCR, 3D vision, GUI automation, and 100+ languages under an MIT license. It handles industrial image analysis. It reads documents. It operates screen interfaces. The breadth of capability at 8 billion parameters is unusual. A newer InternVL3.5 variant adds 16% better reasoning and 4x faster inference.

Tier 3: 24 GB+ VRAM

RTX 3090. RTX 4090. Apple M-series with 32 GB+. This tier runs models that compete with cloud APIs on quality while keeping your data on your desk.

Chat and Reasoning

| Model | Size (total / active) | VRAM (Q4) | License | Link |
|---|---|---|---|---|
| Qwen3.5-27B | 27.8B dense | ~17 GB | Apache 2.0 | |
| GLM-4.7-Flash | 31B / 3B active | ~18-20 GB | MIT | |
| GLM-4.5-Air | 106B / 12B active | ~24 GB (INT4) | MIT | |
| Mistral Small 3.2 | 24B dense | ~14-15 GB | Apache 2.0 | |

Qwen3.5-27B is a dense hybrid. All 27.8 billion parameters work on every token. No expert routing, no quality variance. MMLU-Pro 86.1. SWE-Bench 72.4. Multimodal with 201 languages. At Q4 it fits 24 GB with room for 131K context.

GLM-4.7-Flash leads on coding benchmarks with a 59.2% SWE-Bench score. Mistral Small 3.2 has the best function calling and tool use of any model at this tier.

What does not fit here: GLM-4.7 full is 358 billion parameters. Multi-GPU only. Mistral Large 3 is 675 billion parameters. Needs approximately 340 GB at Q4. These are enterprise models masquerading as consumer products in blog posts.

Voice Output

| Model | Size | VRAM | License | Link |
|---|---|---|---|---|
| Higgs Audio V2 | 6B total | ~24 GB | Llama-deriv (<100K users) | |
| Qwen3-TTS 1.7B | 1.7B | ~16 GB | Apache 2.0 | |
| Dia2 | 1-2B | ~12-24 GB | Apache 2.0 | |

Higgs Audio V2 beats GPT-4o-mini-tts on emotion benchmarks with a 75.7% win rate. It generates speech and background music simultaneously. It hums melodies. The Llama-derived license caps commercial use at 100,000 annual users.

Dia2 specializes in multi-speaker dialogue. Point it at a script and it produces a realistic podcast with two distinct voices, complete with laughs and coughs. Apache 2.0.

Voice Input

At this tier, the best speech recognition models still fit in 8 GB of VRAM. IBM Granite 4.0 and Cohere Transcribe from Tier 1 remain the accuracy leaders. Run them alongside your larger models.
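Before running models side by side, it helps to add up their VRAM figures against the card. The sketch below does that with the numbers from the tables in this guide. The function name and the 2 GB headroom for context and runtime overhead are assumptions, not measured values.

```python
def fits_budget(models_gb: dict[str, float], vram_gb: float,
                headroom_gb: float = 2.0) -> tuple[bool, float]:
    """Return (fits, remaining GB) after loading every model at once."""
    used = sum(models_gb.values())
    remaining = vram_gb - headroom_gb - used
    return remaining >= 0, remaining


if __name__ == "__main__":
    stack = {"Qwen3.5-27B (Q4)": 17, "IBM Granite 4.0 1B": 4}
    ok, left = fits_budget(stack, vram_gb=24)
    print("fits" if ok else "does not fit", f"({left:+.1f} GB)")
```

If the total goes negative, load the speech model only when needed or move it to the CPU.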

Vision

| Model | Size | VRAM (Q4) | License | Link |
|---|---|---|---|---|
| Qwen3-VL-32B | 33B | ~18-20 GB | Apache 2.0 | |
| GLM-4.6V-Flash | 9B | ~7 GB (INT4) | MIT | |

Qwen3-VL-32B is the most capable open-weight vision model that fits this tier. OCR in 32 languages. GUI automation. 3D spatial grounding. It needs Q4 quantization to fit 24 GB.

GLM-4.6V-Flash is the first vision model with native vision-driven function calling. Your AI can see an image and call tools based on what it sees. At 9 billion parameters, it fits comfortably in 16 GB too.

What does not exist: Qwen3-VL-72B. The Qwen3-VL family jumps from 32B dense to 235B-A22B MoE. The 72B exists only in the older Qwen2.5-VL generation. Every blog post recommending Qwen3-VL-72B is recommending a model that was never released.

Create Images from Text

| Model | Size | VRAM | License | Link |
|---|---|---|---|---|
| FLUX.2 klein 4B | 4B | ~13 GB | Apache 2.0 | |
| SD 3.5 Medium | 2.5B | ~6-10 GB | Community (<$1M) | |
| Qwen-Image | 20B | ~24 GB | Apache 2.0 | |
| SD 3.5 Large Turbo | 8.1B | ~11-24 GB | Community (<$1M) | |
| FLUX.2-dev | 32B | ~24 GB (Q4) | Non-Commercial | |

We have run FLUX.2 klein 4B and confirmed it generates images in under a second at 4 inference steps. Apache 2.0 means you can use it commercially. At 13 GB it fits any card in Tier 2 or above.

SD 3.5 Medium fits in 6-10 GB. It runs on almost anything. The Stability Community License allows commercial use if your revenue is under $1 million per year.

FLUX.2-dev produces the highest-quality open-weight images available, but the FLUX Non-Commercial License means you cannot use the outputs commercially without a separate license from Black Forest Labs. It is the same restriction as the CC BY-NC licenses flagged in the voice sections, in different wording.

How to Get Started

Two tools make all of this work.

Ollama is a command-line tool that downloads and runs models with a single command. Install it, type ollama run qwen3.5:4b, and you have a local chatbot. It handles GGUF quantization, GPU detection, and model management. If you are comfortable with a terminal, start here.
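Once a model is running, Ollama also answers HTTP requests on your own machine, which is handy for scripts. The sketch below calls its `/api/generate` endpoint on the default port 11434 using only the standard library. The helper names are ours, and the model tag is the one from the command above; it assumes that model has already been downloaded.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local port


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    # Requires a running Ollama server with this model already pulled.
    print(generate("qwen3.5:4b", "Explain VRAM in one sentence."))
```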

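LM Studio does the same thing with a graphical interface. Download it, search for a model, click run. It supports the GGUF chat and vision-language models listed in this guide; the speech and image-generation models generally need their own runtimes.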

Both are free. Both run offline after the initial model download. Both expose an OpenAI-compatible API, which means any tool expecting ChatGPT can point at your local machine instead.
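In practice, "point at your local machine" means changing the base URL. The sketch below sends an OpenAI-style chat completion request to either tool, assuming their default local ports (11434 for Ollama, 1234 for LM Studio) and that the server is running with the named model loaded. The function names and the `BASE_URLS` table are ours.

```python
import json
import urllib.request

# Default local endpoints; both accept the OpenAI chat-completions format.
BASE_URLS = {
    "ollama": "http://localhost:11434/v1",
    "lmstudio": "http://localhost:1234/v1",
}


def chat_request(server: str, model: str, user_message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at a local server."""
    body = {"model": model, "messages": [{"role": "user", "content": user_message}]}
    return urllib.request.Request(
        f"{BASE_URLS[server]}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(server: str, model: str, user_message: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(chat_request(server, model, user_message), timeout=120) as resp:
        data = json.loads(resp.read())
    return data["choices"][0]["message"]["content"]
```

Existing OpenAI client code usually needs the same single change: swap the base URL for one of the two above.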

Your data never leaves your computer. Your conversations are never logged. Your models run without an internet connection.

FAQ

What GPU do I need to get started?

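Any GPU with 8 GB of VRAM. That includes the 8 GB RTX 3060, the RTX 4060, and any Apple M-series Mac. At Q4 quantization, you can run a 5-billion-parameter chatbot, speech recognition, text-to-speech, and vision understanding. By the Tier 1 table figures they will not all fit in 8 GB at the same moment, so load the ones each task needs and push the small speech models to the CPU.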

Can I run AI on my CPU without a GPU?

Yes, for some models. Piper TTS, MeloTTS, and Kokoro-82M all run on CPU. Whisper and smaller LLMs run on CPU through llama.cpp, though significantly slower than GPU inference. Apple Silicon Macs use unified memory, so the CPU and GPU share the same pool.

Is the quality comparable to ChatGPT or Claude?

At the 8 GB tier, no. At 24 GB with Qwen3.5-27B, the gap narrows substantially. On coding tasks, GLM-4.7-Flash matches or exceeds GPT-4o on SWE-Bench. On vision tasks, Qwen3-VL-32B competes with proprietary APIs. The trade-off is always speed and context length.

What about Llama 4?

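Llama 4 Scout has 109 billion total parameters with 17 billion active and offers a 10-million-token context window. At Q4 the weights alone need roughly 55 to 65 GB (compare Mistral Small 4 above: 119 billion parameters, 60-70 GB), so it does not fit on a single 24 GB card without offloading most of the model to system RAM. Its benchmark performance also trails Qwen3.5-27B on most tasks, so we have not included it here. Worth watching as fine-tunes improve.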

Which models can I use commercially?

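Every model marked Apache 2.0 or MIT, plus the CC-BY-4.0 models (Canary-Qwen, Parakeet TDT) as long as you provide attribution. Models under custom licenses (Gemma ToU, NVIDIA Open, the Stability Community License, the Llama-derived Higgs Audio license) come with conditions, so read them before you ship. Check the license column. We have flagged every non-commercial restriction. The biggest traps are Voxtral-4B TTS (CC BY-NC), Fish Speech 1.5 (CC-BY-NC-SA), FLUX.2-dev (Non-Commercial), and XTTS-v2 (CPML, company defunct).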
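If you track your stack in a script, the license check is a simple filter. The sketch below uses a handful of model and license pairs copied from this guide. The `PERMISSIVE` set and the function name are ours, and the filter is deliberately strict: anything not on the list is excluded, including licenses that permit commercial use under conditions.

```python
PERMISSIVE = {"Apache 2.0", "MIT"}

# A few (model, license) pairs copied from the tables in this guide.
MODELS = [
    ("Qwen3.5-4B", "Apache 2.0"),
    ("Phi-4-mini", "MIT"),
    ("Voxtral-4B", "CC BY-NC 4.0"),
    ("Fish Speech 1.5", "CC-BY-NC-SA"),
    ("FLUX.2-dev", "Non-Commercial"),
    ("FLUX.2 klein 4B", "Apache 2.0"),
]


def commercially_safe(models: list[tuple[str, str]]) -> list[str]:
    """Keep only models whose license string is on the permissive list."""
    return [name for name, lic in models if lic in PERMISSIVE]


if __name__ == "__main__":
    for name in commercially_safe(MODELS):
        print(name)
```

A string match is no substitute for reading the license text, but it catches the obvious traps early.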

Every link in this guide was verified against a live HuggingFace page on March 28, 2026. VRAM requirements were validated against actual parameter counts, not marketing claims. For the complete model registry with full specifications, see our Open-Source Local AI Models Registry. Models and benchmarks change fast. We will update this guide as new releases change the picture.

Related Posts

How to automate employee onboarding without code

I've onboarded over 200 people across four companies in 18 years. The worst one happened in 2019. A senior engineer showed up on Monday morning to an empty desk. No laptop. No email account. No Sla...

How to migrate from Zapier to Make or n8n

I migrated 47 Zaps last year. Took me three weeks, about 40 hours of actual work, and I broke two production workflows along the way. One was a Stripe webhook that silently stopped firing for 36 ho...

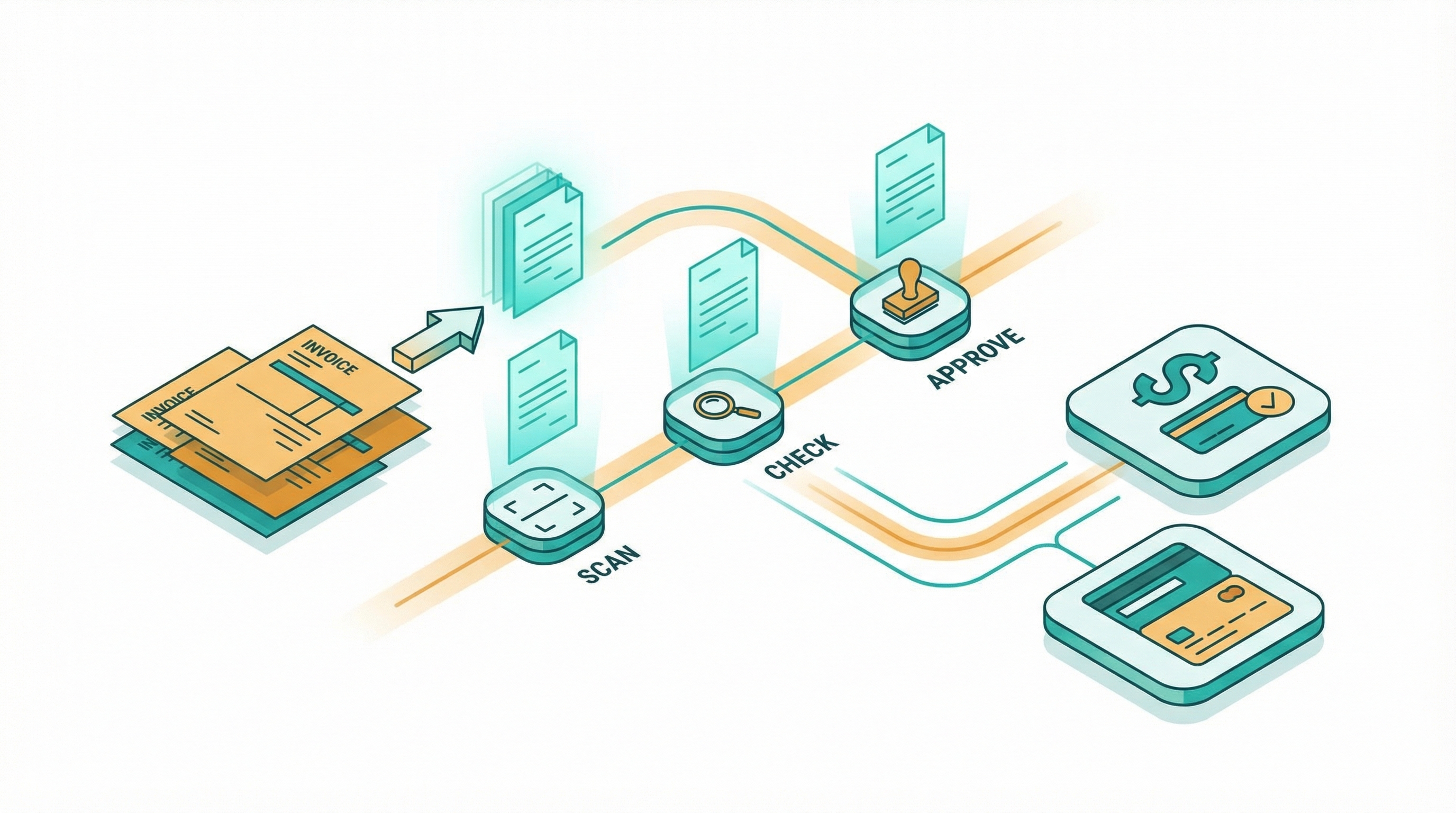

How to automate invoice processing without code

I spent two years manually processing invoices for a consulting business. Every Friday afternoon looked the same: download PDFs from email, retype line items into QuickBooks, cross-check amounts ag...