How to self-host n8n when you are not an engineer

I self-hosted n8n on an $8 VPS last month. The whole thing took about 45 minutes, and I'm not a sysadmin or someone who runs servers for a living. I just got tired of watching my n8n Cloud bill grow every time I added a workflow.

Here is what pushed me over: n8n's self-hosted Community Edition is completely free. Unlimited workflows. Unlimited executions. No per-run charges. The only cost is a cheap server and the time it takes to set it up. I run over 3,000 executions per month now on infrastructure that costs me about $8. On n8n Cloud, that same workload already exceeds the 2,500 executions included in the $24 Starter plan. Same software. Different bill.

This guide walks through every step I followed. Docker Compose is the path (it is what n8n officially recommends), and it reduces later updates to three short commands.

What you need before starting

Four things. That's it.

A VPS (virtual private server). A small computer in a data center, rented by the month. Hetzner's CX22 plan costs about $8/month: 2 vCPUs, 4 GB RAM, 40 GB storage. DigitalOcean's Basic Droplet runs $6 per month: 1 vCPU, 1 GB RAM, 25 GB SSD. I'd recommend at least 2 GB of RAM for anything beyond basic testing because n8n's editor gets sluggish on 1 GB once your workflows grow past 20 or 30 nodes.

A domain name. SSL certificates (the padlock icon in your browser) require a domain, something like n8n.mydomain.com. I pointed mine at the server's IP address by adding an A record in my DNS settings. If that is unfamiliar: go to the domain registrar, find DNS settings, add a record with type "A", host name "n8n", and the server IP as the value.
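To confirm the A record has propagated before moving on, the lookup result can be compared with the server's IP. This is a minimal sketch: resolves_to is a hypothetical helper name, 203.0.113.10 is a documentation placeholder for your server's IP, and it assumes dig is installed (on Ubuntu it ships in the dnsutils package).

```shell
# Succeed if the expected IP appears among the addresses read from stdin
# (one address per line, which is how `dig +short` prints them).
resolves_to() {
  grep -qxF -- "$1"
}

# Usage (placeholder domain and IP):
#   dig +short n8n.yourdomain.com A | resolves_to 203.0.113.10 && echo "DNS is ready"
```

If the check fails right after adding the record, waiting a few minutes and retrying is usually enough, since DNS changes take time to spread.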

Basic terminal comfort. This involves typing commands into a terminal window. Nothing exotic. I explain every command below, but knowing how to open a terminal, connect via SSH, and copy-paste text is the baseline.

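Docker and Docker Compose on your VPS. Most providers offer Ubuntu images with Docker pre-installed. If the image does not include Docker, the install takes two commands:

curl -fsSL https://get.docker.com | sh

sudo usermod -aG docker $USER

The first downloads and runs Docker's official install script. The second lets your user run docker without sudo; log out and back in for it to take effect.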
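A quick way to check that the tools are actually available is to look for them on the PATH. The sketch below is an illustration only; require_cmds is a hypothetical helper name, not something Docker provides.

```shell
# Return 0 if every named command is on PATH; report the missing ones otherwise.
require_cmds() {
  missing=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage on the VPS (in a fresh SSH session):
#   require_cmds docker curl && docker --version && docker compose version
```

If docker compose version prints a version number, both Docker and the Compose plugin are in place.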

No Kubernetes. No load balancers. No CI/CD pipelines.

The Docker Compose setup

Three files total: a docker-compose.yml that tells Docker which services to run, an .env file that stores your passwords, and a Caddyfile for SSL. Here is my walkthrough.

SSH into the server. Create a project directory:

mkdir ~/n8n-docker && cd ~/n8n-docker

That creates a folder called n8n-docker and moves into it.

Now the .env file, which holds all the secret values that should not be hardcoded into the compose file:

# .env
POSTGRES_USER=n8n
POSTGRES_PASSWORD=change_this_to_something_long_and_random
POSTGRES_DB=n8n
N8N_ENCRYPTION_KEY=also_change_this_to_a_long_random_string
N8N_HOST=n8n.yourdomain.com
N8N_PORT=5678
N8N_PROTOCOL=https
WEBHOOK_URL=https://n8n.yourdomain.com/
GENERIC_TIMEZONE=America/New_York

Two values matter more than anything else in this entire setup: POSTGRES_PASSWORD (your database password) and N8N_ENCRYPTION_KEY (what n8n uses to encrypt every stored credential: API keys, OAuth tokens, service passwords). Lose the encryption key and every credential in the instance becomes unreadable. I keep mine in a password manager. Not optional.
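One way to produce long random values for both settings is to read bytes from /dev/urandom. This is a minimal sketch; gen_secret is a hypothetical helper name, and openssl rand -hex 32 gives an equivalent result if OpenSSL is installed.

```shell
# Print a random 64-character hex string (32 random bytes).
# /dev/urandom is available on any Linux VPS.
gen_secret() {
  head -c 32 /dev/urandom | od -An -v -tx1 | tr -d ' \n'
  echo
}

# Print one fresh value for each of the two secrets in .env
echo "POSTGRES_PASSWORD=$(gen_secret)"
echo "N8N_ENCRYPTION_KEY=$(gen_secret)"
```

Hex output avoids characters such as $ or # that can be misread inside an .env file. Copy the two printed lines into .env and into the password manager at the same time.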

Now the main file, docker-compose.yml:

services:
  postgres:
    image: postgres:16
    restart: unless-stopped
    environment:
      POSTGRES_USER: ${POSTGRES_USER}
      POSTGRES_PASSWORD: ${POSTGRES_PASSWORD}
      POSTGRES_DB: ${POSTGRES_DB}
    volumes:
      - postgres_data:/var/lib/postgresql/data
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U ${POSTGRES_USER}"]
      interval: 10s
      timeout: 5s
      retries: 5

  caddy:
    image: caddy:2
    restart: unless-stopped
    ports:
      - "80:80"
      - "443:443"
    volumes:
      - ./Caddyfile:/etc/caddy/Caddyfile
      - caddy_data:/data
      - caddy_config:/config

  n8n:
    image: n8nio/n8n
    restart: unless-stopped
    environment:
      DB_TYPE: postgresdb
      DB_POSTGRESDB_HOST: postgres
      DB_POSTGRESDB_PORT: 5432
      DB_POSTGRESDB_DATABASE: ${POSTGRES_DB}
      DB_POSTGRESDB_USER: ${POSTGRES_USER}
      DB_POSTGRESDB_PASSWORD: ${POSTGRES_PASSWORD}
      N8N_ENCRYPTION_KEY: ${N8N_ENCRYPTION_KEY}
      N8N_HOST: ${N8N_HOST}
      N8N_PORT: ${N8N_PORT}
      N8N_PROTOCOL: ${N8N_PROTOCOL}
      WEBHOOK_URL: ${WEBHOOK_URL}
      GENERIC_TIMEZONE: ${GENERIC_TIMEZONE}
      NODE_ENV: production
    volumes:
      - n8n_data:/home/node/.n8n
    depends_on:
      postgres:
        condition: service_healthy

volumes:
  postgres_data:
  n8n_data:
  caddy_data:
  caddy_config:

Three services running together.

PostgreSQL stores your workflows, credentials, and execution history. Caddy handles SSL certificates automatically: no manual Let's Encrypt commands, no renewal cron jobs. n8n is the application itself. The depends_on block tells Docker: do not start n8n until PostgreSQL passes its health check. That prevents connection errors on first boot.

Why PostgreSQL instead of the default SQLite? SQLite locks the entire database file on every write, which causes slow editor performance and database lock errors in production. It also does not support n8n's queue mode for scaling. PostgreSQL handles concurrent writes, supports hot backups while n8n runs, and is what n8n officially recommends for anything beyond local testing.

One more file. The Caddyfile tells Caddy how to route traffic to n8n:

n8n.yourdomain.com {
    reverse_proxy n8n:5678
}

That is the entire file. Three lines. Caddy reads the domain name, fetches an SSL certificate from Let's Encrypt, renews it before expiration, and forwards all HTTPS traffic to n8n on port 5678.

No certificate commands. No renewal cron jobs.
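Before starting the stack, a short preflight check can catch placeholder values left in .env. The following is a minimal sketch, not part of n8n or Docker: check_env is a hypothetical helper name, and the placeholder patterns and key list are taken from the .env file shown earlier.

```shell
# Check an .env file for leftover placeholders and missing keys.
# Prints each problem and returns non-zero if any were found.
check_env() {
  env_file=$1
  problems=0
  if grep -Eq 'change_this|yourdomain\.com' "$env_file"; then
    echo "placeholder values still present in $env_file"
    problems=1
  fi
  for key in POSTGRES_PASSWORD N8N_ENCRYPTION_KEY N8N_HOST WEBHOOK_URL; do
    if ! grep -Eq "^${key}=.+" "$env_file"; then
      echo "missing or empty: $key"
      problems=1
    fi
  done
  return "$problems"
}

# Usage, from ~/n8n-docker:
#   chmod 600 .env     # make the secrets readable only by your user
#   check_env .env && docker compose config --quiet
```

docker compose config --quiet parses the compose file with the .env values substituted and prints nothing when the result is valid, so a silent run means the indentation and variable names are fine.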

Start everything with one command:

docker compose up -d

The -d flag runs everything in the background. Wait about 30 seconds for PostgreSQL to initialize and n8n to boot, then open https://n8n.yourdomain.com in a browser. The n8n setup screen appears, asking you to create an owner account.
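Instead of guessing at the wait, the startup can be polled. This is a rough sketch: wait_for is a hypothetical helper name, and the usage line assumes n8n's /healthz health endpoint is reachable through Caddy at your domain.

```shell
# Retry a command until it succeeds: wait_for ATTEMPTS DELAY_SECONDS COMMAND...
# Returns 0 on the first success, 1 if every attempt fails.
wait_for() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Usage (placeholder domain): poll for up to a minute, show logs on failure
#   wait_for 12 5 curl -fsS https://n8n.yourdomain.com/healthz \
#     || docker compose logs --tail 50 n8n
```

If the page never loads, docker compose ps shows which of the three containers is not running, and the logs usually name the cause.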

Done.

Making it production-ready

A running instance is not a production instance.

I learned that distinction the hard way with a different tool years ago. Three things separate "it works on my server" from "I can trust this with business workflows": backups, updates, and security patches.

Backups. Your database holds every workflow, every credential, every execution log. Lose it and it's a rebuild from scratch. I run a daily backup with a cron job that creates a compressed PostgreSQL snapshot at 3 AM. The ~/n8n-backups folder has to exist first (mkdir -p ~/n8n-backups creates it):

# Add to crontab (crontab -e)
0 3 * * * docker compose -f ~/n8n-docker/docker-compose.yml exec -T postgres pg_dump -U n8n n8n | gzip > ~/n8n-backups/n8n-db-$(date +\%Y\%m\%d).sql.gz

PostgreSQL's pg_dump creates consistent snapshots while n8n is running: one of the key reasons I chose PostgreSQL over SQLite.

I keep 30 days of backups locally and push an offsite copy once a week.
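A 30-day retention window needs something to delete the older archives. One possible approach, sketched here with a hypothetical helper name (prune_backups) and assuming the file names produced by the backup jobs in this section, is a find command keyed on file age:

```shell
# Delete n8n backup archives older than KEEP_DAYS (default 30) from BACKUP_DIR.
# Only files named n8n-*.gz directly inside the directory are touched.
prune_backups() {
  backup_dir=$1
  keep_days=${2:-30}
  find "$backup_dir" -maxdepth 1 -type f -name 'n8n-*.gz' -mtime +"$keep_days" -delete
}

# Equivalent crontab line, running daily at 4:30 AM:
#   30 4 * * * find ~/n8n-backups -maxdepth 1 -type f -name 'n8n-*.gz' -mtime +30 -delete
```

The name pattern keeps the command from deleting anything else that ends up in the backup folder.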

I also back up the n8n data volume, which stores custom nodes and binary file data:

0 4 * * * docker run --rm -v n8n-docker_n8n_data:/data -v ~/n8n-backups:/backup alpine tar czf /backup/n8n-data-$(date +\%Y\%m\%d).tar.gz -C /data .
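A backup is only useful if it can be read back. Because the database job pipes pg_dump into gzip, a failed dump can still leave a tiny but valid .gz file behind, so a size-blind check is not enough. The sketch below uses a hypothetical helper name (verify_db_backup) and relies on plain-text pg_dump output starting with a "PostgreSQL database dump" comment.

```shell
# Succeed only if FILE is an intact gzip archive whose first lines
# look like the header of a plain-text pg_dump.
verify_db_backup() {
  gzip -t "$1" 2>/dev/null || return 1
  gzip -dc "$1" | head -n 5 | grep -q 'PostgreSQL database dump'
}

# Usage (YYYYMMDD stands for the date in the file name):
#   verify_db_backup ~/n8n-backups/n8n-db-YYYYMMDD.sql.gz || echo "backup looks broken"
#
# Restore sketch, assuming n8n is stopped and the target database is empty:
#   gzip -dc ~/n8n-backups/n8n-db-YYYYMMDD.sql.gz \
#     | docker compose exec -T postgres psql -U n8n -d n8n
```

Running a test restore on a scratch server once is the only real proof that the backups work.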

The most important backup of all: the N8N_ENCRYPTION_KEY. I stored it in a password manager and printed a copy. If that key disappears, every stored credential becomes permanently unreadable.

Updates. n8n ships new versions frequently. My update process is three commands, run from the project directory:

cd ~/n8n-docker
docker compose pull # downloads latest images
docker compose down # stops current containers
docker compose up -d # starts with new images

Your data survives because it lives in Docker volumes, not inside the containers themselves. Volumes persist across restarts. I check the release notes before pulling and I don't run blind updates: monthly cadence unless a security patch changes the timeline.
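The same three steps can be wrapped in a small function that stops at the first failure, so a failed download never takes the running instance offline. This is a sketch under assumptions: run, update_n8n, DRY_RUN, and N8N_COMPOSE_FILE are hypothetical names introduced here, and the default path matches the project directory used in this guide.

```shell
# Path to the compose file; override by setting N8N_COMPOSE_FILE beforehand.
N8N_COMPOSE_FILE=${N8N_COMPOSE_FILE:-$HOME/n8n-docker/docker-compose.yml}

# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Pull first; if the pull fails, the running containers are left untouched.
update_n8n() {
  run docker compose -f "$N8N_COMPOSE_FILE" pull || return 1
  run docker compose -f "$N8N_COMPOSE_FILE" down || return 1
  run docker compose -f "$N8N_COMPOSE_FILE" up -d
}

# Usage:
#   DRY_RUN=1 update_n8n   # print the three commands without running them
#   update_n8n             # run the update
```

Taking a fresh database dump right before a real run gives a rollback point if a new version misbehaves.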

Security patches. This part is serious.

In January 2026, n8n disclosed CVE-2026-21858 (nicknamed "Ni8mare"): a CVSS 10.0 vulnerability allowing unauthenticated remote code execution on any exposed instance. Over 39,000 self-hosted servers were unpatched when The Hacker News broke the story. More critical CVEs followed in February: six disclosed on a single day.

Cloud users got patched automatically. Self-hosted users had to run the update commands themselves.

Many did not.

I subscribe to n8n's GitHub releases feed and the community forum. When a security advisory drops, I update the same day. That's the commitment: not "I'll get to it when I have time" but "I'll handle it today."

When self-hosting is not the right call

I'm not going to pretend this path works for everyone. Here's my honest breakdown.

Self-host if: your monthly executions exceed 2,500 (where Cloud's Starter plan caps out), data privacy matters to the business, 30 minutes per month for maintenance is acceptable, or running free software on owned infrastructure appeals to how you think about tools.

Use n8n Cloud if: your time is worth more than $16/month, thinking about servers sounds like a distraction from actual work, the team needs collaboration features (shared credentials, user roles), or guaranteed uptime without personal responsibility is a requirement.

The cost comparison is simple: n8n Cloud Starter at $24/month for 2,500 executions, my Hetzner VPS at about $8/month with unlimited executions. The $16 gap buys one thing: the responsibility of maintaining infrastructure, applying patches, and running backups.
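The trade-off can be put into numbers using only the figures from this article: the two monthly prices and the 30 minutes of maintenance per month. The variable names below are illustrative, and the result is a rough break-even, not a precise valuation of anyone's time.

```shell
cloud_monthly=24         # n8n Cloud Starter, USD per month
vps_monthly=8            # Hetzner VPS, USD per month
maintenance_minutes=30   # monthly maintenance time assumed in this article

gap=$((cloud_monthly - vps_monthly))                     # monthly savings
yearly=$((gap * 12))                                     # yearly savings
break_even_hourly=$((gap * 60 / maintenance_minutes))    # USD per hour

echo "monthly gap: \$$gap"
echo "yearly gap: \$$yearly"
echo "break-even hourly rate: \$$break_even_hourly"
```

With these inputs the gap is $16 per month, $192 per year, and the break-even works out to $32 per hour: if half an hour of your time is worth more than $16, Cloud is the cheaper option on paper.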

At low volume (under 1,000 executions per month), Cloud makes sense because the maintenance time is not worth the savings. At high volume, the numbers flip: I've tracked deployments doing 150,000 monthly executions on a $50 DigitalOcean droplet, workloads that would cost $600 or more on equivalent SaaS platforms.

The real question is not about money. It's about whether I'll actually keep the server patched and backed up.

For me, the answer was yes. The 45-minute setup paid for itself in month one.

Pricing verified March 2026. n8n Cloud: n8n.io/pricing. Hetzner: hetzner.com/cloud. DigitalOcean: digitalocean.com/pricing. CVE data: The Hacker News, Rapid7, Canadian Cyber Centre (Jan-Feb 2026).


Related Posts

How to automate employee onboarding without code

I've onboarded over 200 people across four companies in 18 years. The worst one happened in 2019. A senior engineer showed up on Monday morning to an empty desk. No laptop. No email account. No Sla...

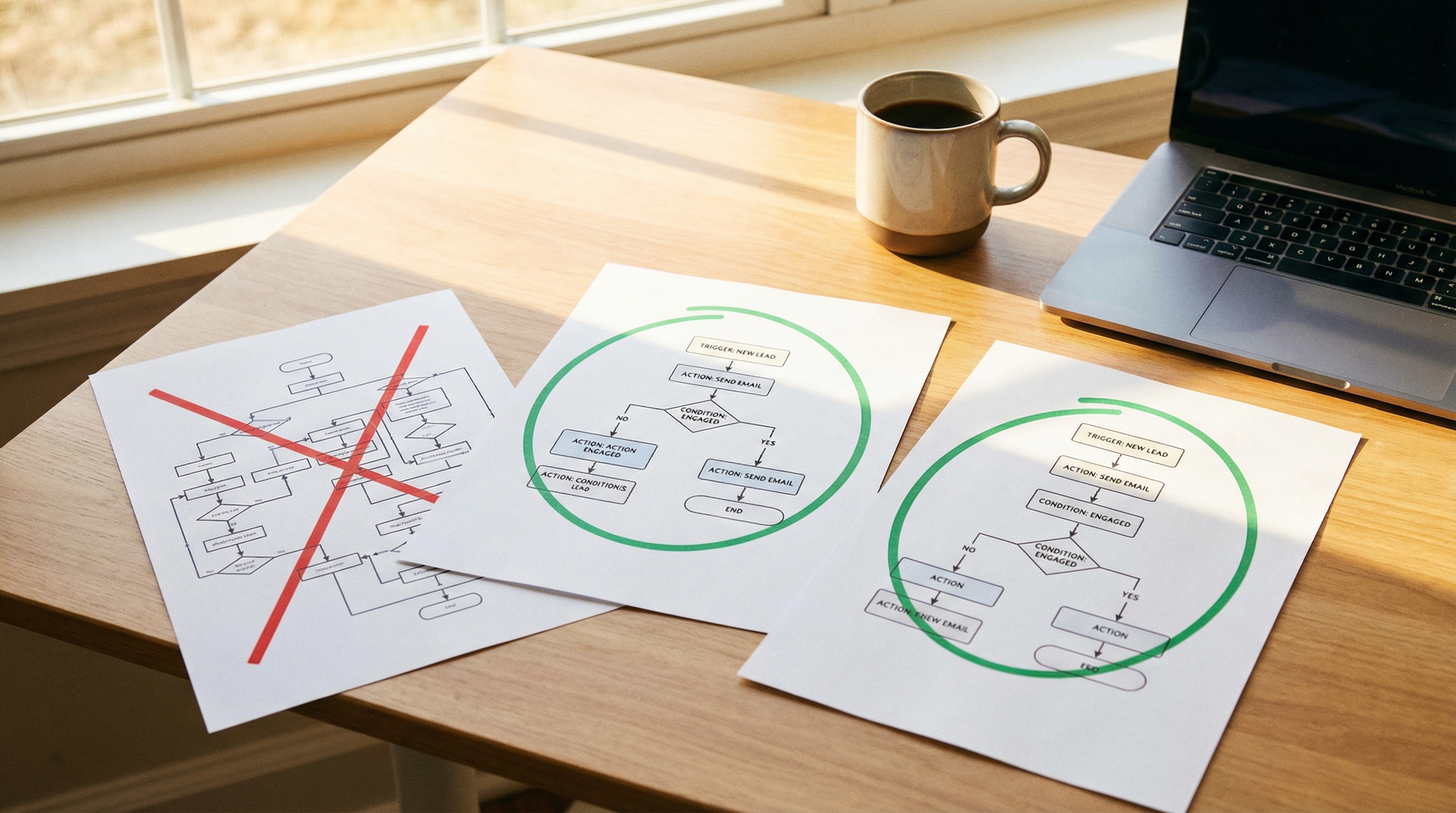

How to migrate from Zapier to Make or n8n

I migrated 47 Zaps last year. Took me three weeks, about 40 hours of actual work, and I broke two production workflows along the way. One was a Stripe webhook that silently stopped firing for 36 ho...

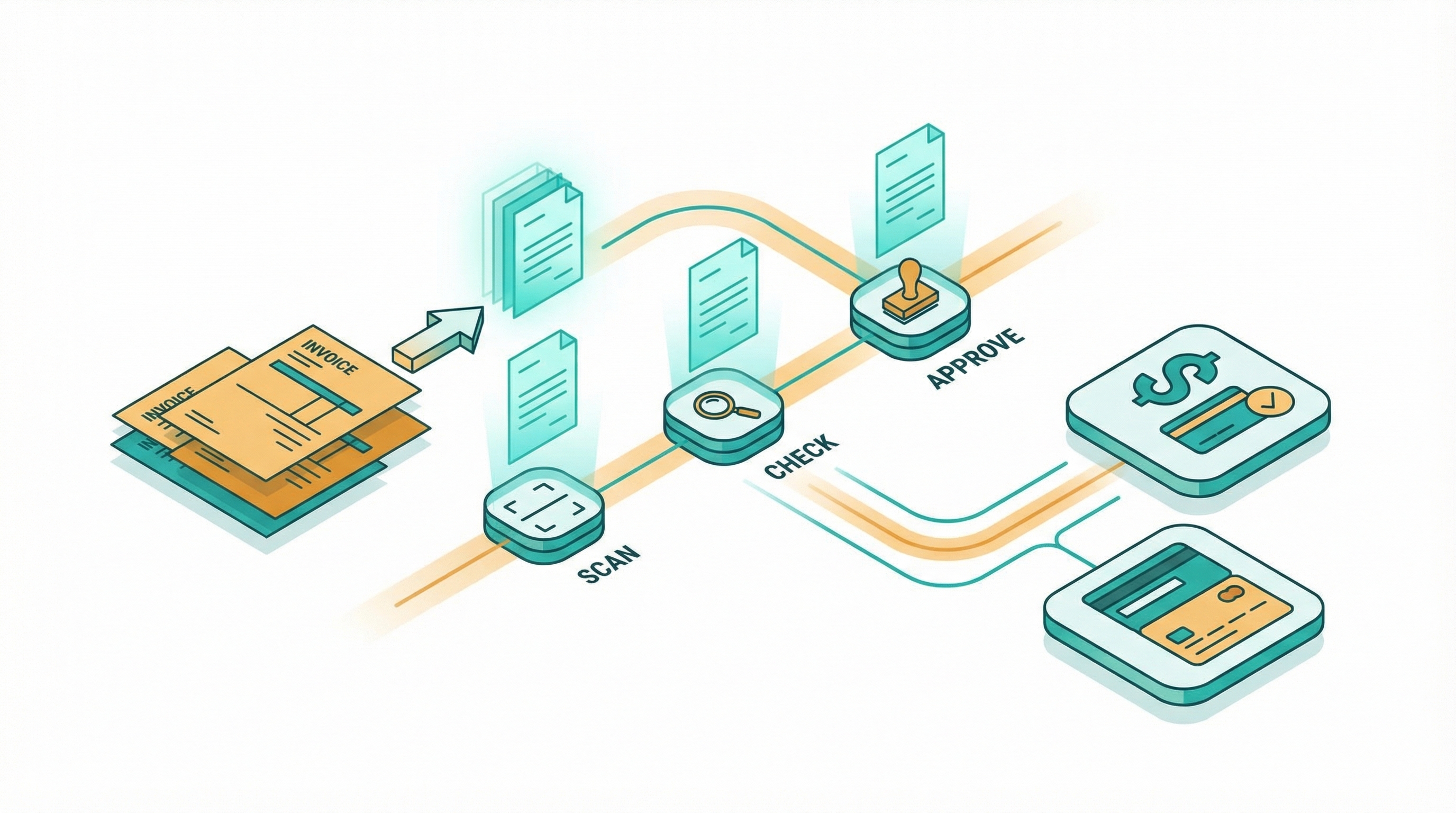

How to automate invoice processing without code

I spent two years manually processing invoices for a consulting business. Every Friday afternoon looked the same: download PDFs from email, retype line items into QuickBooks, cross-check amounts ag...